Video Preprocessing: Foundations of Semantic Search

How we prep videos for our AI-video gen assistant

A guest post from Adithya Thayyil on WAT.ai’s ClipABit team!

You have a video editor with 200 files of raw footage. You need that exact 3-second clip where someone opens the fridge. Clicking through files manually? That’s your whole afternoon gone.

We’re building ClipABit, a semantic search engine that integrates directly with video editors. Upload your footage, query with natural language (“show me fridge scenes”), get results in seconds. Still, before any embeddings or cross-attention happens, there’s a massive preprocessing problem:

1080p @ 30fps = 100 MB/minute

A typical editor’s project folder: 50+ hours of footage = 300 GB. You can’t embed every frame (that’s 5.4 million frames), you can’t store them all, and half are blurry B-roll anyway.

Preprocessing solves this! No transformers, no attention mechanisms, but it’s what makes the semantic search engine actually work.

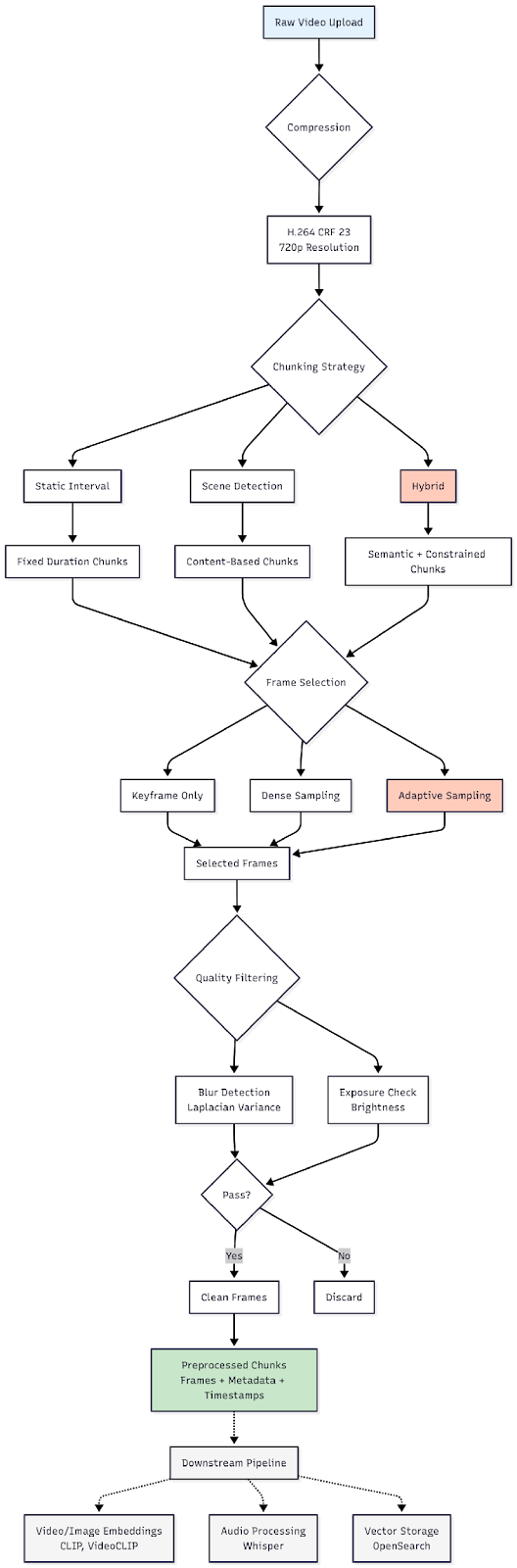

The Three Problems We Solve

Chunking: Where do moments start and end?

Video is continuous. Search needs discrete chunks. How do you split “cooking dinner” from “eating dinner”?

Approaches tried:

Static chunking (every 10 seconds): Simple but dumb. Splits activities mid-action. Your “pouring coffee” search hits two chunks: “pour—” and “—ing coffee.”

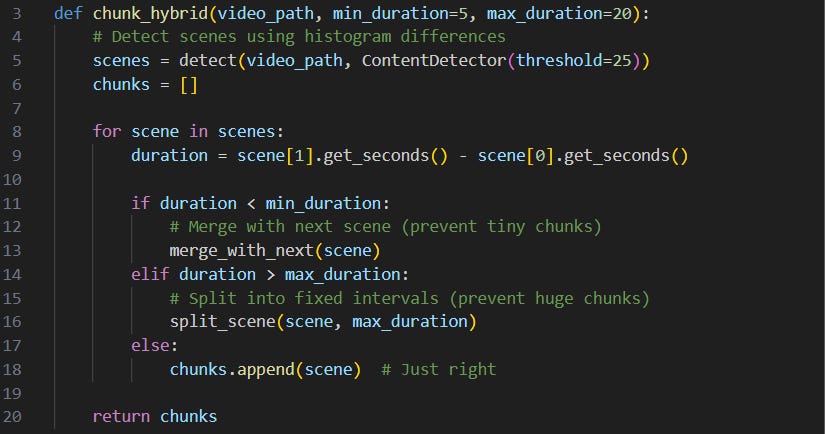

Scene detection (PySceneDetect): Uses histogram differences between consecutive frames to detect cuts. Works great for semantically meaningful boundaries but unpredictable; you get 2-second chunks and 60-second chunks. Critical for aligning chunks with actual content changes [1].

Hybrid (scene detection + constraints): Our winner. Detect scene changes but enforce 5–20 second limits. Semantic boundaries without chaos.

Result: ~20,000 semantic chunks for 100 hours, all 5–20 seconds long. Matches what VideoCLIP and similar video-text models expect [2].

Frame Selection: Which frames actually matter?

30 fps = 300 frames per 10-second chunk. If someone’s sitting still, those 300 frames are basically identical. Waste of storage and compute.

Approaches:

Dense sampling (1 frame/sec): Simple baseline, 10 frames per chunk.

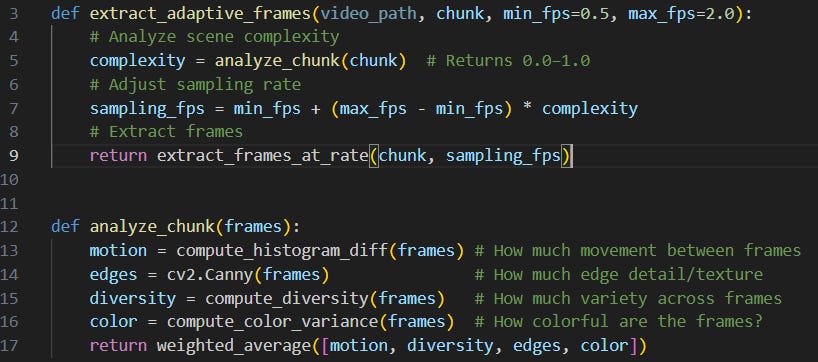

Adaptive sampling (0.5–2 fps based on complexity): Our winner. Analyze the scene: if static (sleeping), sample 0.5 fps. If dynamic (cooking), sample 2 fps.

Example: - Sleeping: complexity = 0.15 → 0.5 fps → 5 frames/10s - Cooking: complexity = 0.75 → 1.8 fps → 18 frames/10s

Result: A lot of storage reduction vs dense sampling, same search quality. Important for downstream CLIP-based video encoders [3].

Quality Filtering: Remove the junk

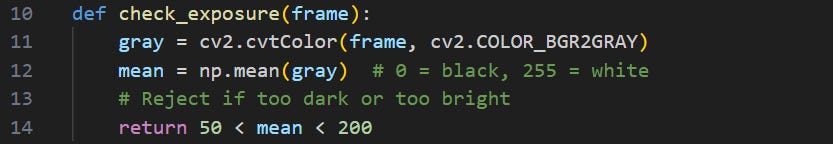

Not all frames are usable. Some are blurry, overexposed, or too dark.

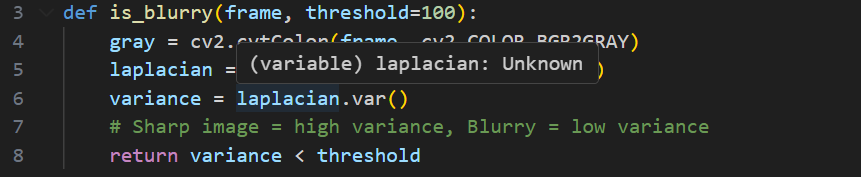

Blur detection (Laplacian variance):

Exposure check (mean brightness):

Result: Filter out ~19% of extracted frames (blurry, bad exposure, low contrast).

Production Pipeline

flow chart of pipeline

Try It Yourself!

We have an interactive demo :)

You can:

Upload your own videos —Test preprocessing on real footage (mp4, avi, mov, mkv)

Compare chunking strategies — See how static intervals vs scene detection vs hybrid affect your content

Experiment with frame selection — Toggle between keyframe, dense, and adaptive sampling to find the sweet spot

Visualize the pipeline — Interactive timeline shows exactly which frames get selected from each chunk

Or run it locally:

git clone https://github.com/ClipABit/preproc-research.git

uv sync

uv run streamlit run app.pyReferences

[1] PySceneDetect: Intelligent Scene Detection for Videos

Brandon Castellano. GitHub Repository. https://github.com/Breakthrough/PySceneDetect

Open-source tool for automatic scene boundary detection using content-aware algorithms (histogram differences, adaptive thresholding).

[2] VideoCLIP: Contrastive Pre-Training for Zero-shot Video-Text Understanding

Xu et al. EMNLP 2021.

https://arxiv.org/abs/2109.14084

Demonstrates effective video-text alignment using 5-20 second temporal chunks with sparse frame sampling for efficient multimodal learning.

[3] CLIP: Learning Transferable Visual Models From Natural Language Supervision

Radford et al. OpenAI, 2021.

https://arxiv.org/abs/2103.00020

Foundation model for image-text understanding. Frame selection strategies optimize the trade-off between coverage and computational efficiency for CLIP-based video encoders.